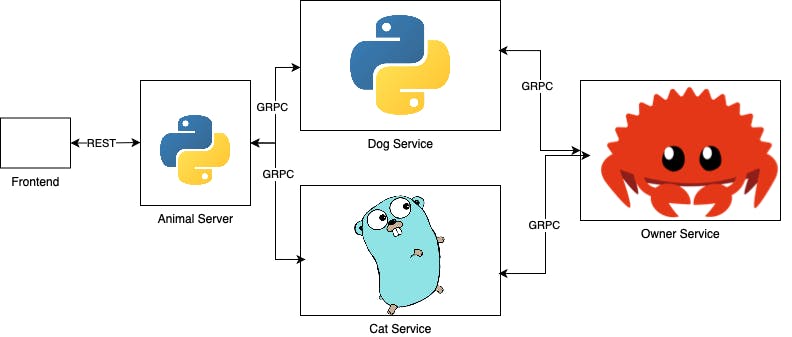

In the previous part we got to see gRPC in action. The problem was to build three microservices (Cat, Dog, Owner) that communicate with each other via gRPC, plus an API gateway (Unicorn) that talks to the microservices over gRPC and to the client over REST.

We decided to write the Owner microservice in Rust, the Cat microservice in Go, and both the Dog microservice and the API gateway (a simple Flask application) in Python.

In the previous part, Beyond Rest: gRPC in Microservices Part I, we wrote both the Rust Owner microservice and the Go Cat microservice.

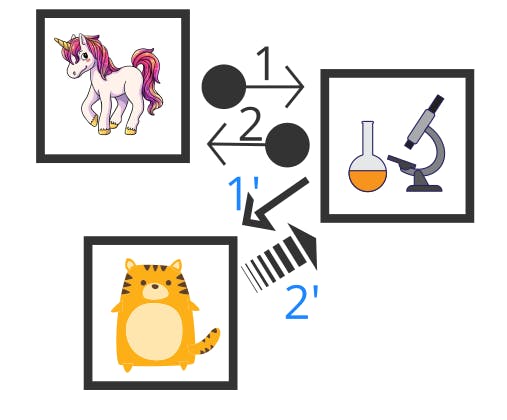

In this part we will continue by writing both the Dog microservice and the API Gateway server, which will route requests to our microservices. Then we will time-travel to the future, where a group of scientists is trying to analyze what cats are saying. To do that, they need to communicate with our Cat microservice and stream what the cats are saying. Since sound and video data are too big to be sent in a single request, they are streamed from the Cat microservice to the scientists' new microservice, which analyzes the data.

And this is where gRPC really shines. In my humble opinion, gRPC solves a lot of the problems I faced with REST. To name a few:

- With gRPC, I don't need a third-party tool like Swagger to describe my APIs. All the APIs are documented in the proto file.

- Since REST transfers JSON text (most of the time) and gRPC transfers binary messages, gRPC tends to be faster and lighter than REST.

- But above all, with gRPC I don't need a separate protocol to stream data when I need to, like I do with REST. Because gRPC is built on HTTP/2, I can directly use its streaming feature to stream from server to client, from client to server, or in both directions at once.
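To make the size point a bit more concrete, here is a rough sketch that hand-encodes a one-field message the way Protocol Buffers lays out a string field on the wire (a tag byte, a length byte, then the UTF-8 bytes) and compares it with the equivalent JSON. The `encode_string_field` helper is hypothetical and only covers tiny messages; in a real service the generated classes do this for you.

```python
import json


def encode_string_field(field_number: int, value: str) -> bytes:
    """Hand-rolled protobuf encoding of a single string field.

    Illustration only: assumes field_number < 16 and a payload shorter than
    128 bytes, so the tag and the length each fit in one byte.
    """
    payload = value.encode("utf-8")
    if not 1 <= field_number < 16 or len(payload) >= 128:
        raise ValueError("this sketch only supports small fields")
    tag = (field_number << 3) | 2  # wire type 2 = length-delimited
    return bytes([tag, len(payload)]) + payload


as_json = json.dumps({"catName": "Tom"}).encode("utf-8")
as_proto = encode_string_field(1, "Tom")

print(len(as_json), as_json)    # 18 bytes of JSON text
print(len(as_proto), as_proto)  # 5 bytes on the wire
```

The gap comes mostly from the field name: JSON repeats `catName` in every message, while the binary form only carries the field number from the proto file.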

So let's list what we are going to do:

- Build our Dog microservice in Python.

- Build our API Gateway in Flask.

- Build our Cat-Analysis microservice in Go (Because we will do some asynchronous work, we will get to that later).

If you only care about streaming, you can skip the first two parts, as they are a continuation of the previous part.

Create our Dog microservice

First, we need an environment to work in. Create a requirements.txt file and list the required packages in it.

    grpcio
    grpcio-tools

Create a virtual environment using

    python3 -m venv env

Then activate it with

    source env/bin/activate

Install the required packages

    python3 -m pip install -r requirements.txt

And now we're ready to start.

Like all gRPC services, we need a Server to serve the data and a Client to communicate with the Owner service and notify the owners that their dog is going to play.

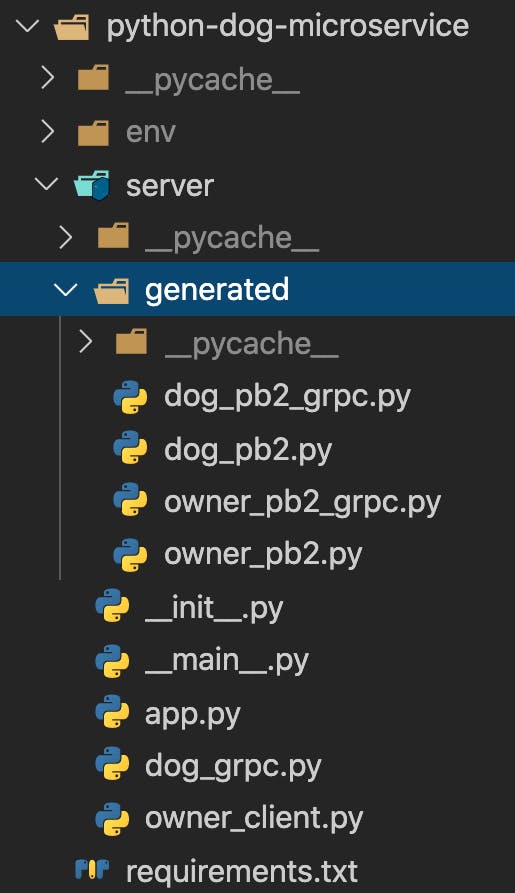

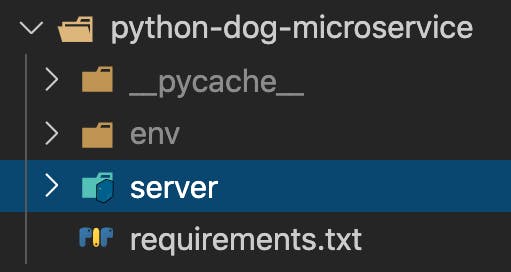

We will also need a directory for our generated files. It's always a good idea to keep all of that in a module, so once we finish, your folder structure should look like this.

cd into your service directory and generate the dog and owner gRPC files

    cd python-dog-microservice
    env/bin/python -m grpc_tools.protoc -I../proto --python_out=./server/generated --grpc_python_out=./server/generated ../proto/dog.proto
    env/bin/python -m grpc_tools.protoc -I../proto --python_out=./server/generated --grpc_python_out=./server/generated ../proto/owner.proto

Since I decided to save the generated files in a generated directory, I have to change something in the *_pb2_grpc.py files, because used as generated they fail to import. There are a lot of issues raised about this problem here.

Simply put, we need to change this line in dog_pb2_grpc.py:

    import dog_pb2 as dog__pb2

to this

    from . import dog_pb2 as dog__pb2

You need to do the same in owner_pb2_grpc.py too. This makes Python understand that the module it is trying to import lives in the current package, not in the base folder.
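If you regenerate the files often, making this change by hand gets tedious. Below is a minimal sketch of automating it with a regular expression. The `make_imports_relative` function is a hypothetical helper, and it assumes the generated line always has the shape `import <name>_pb2 as <alias>`, as in the files above.

```python
import re

# Matches the absolute import emitted in the generated file, for example
# "import dog_pb2 as dog__pb2". Assumed shape, see the note above.
_ABSOLUTE_IMPORT = re.compile(r"^import (\w+_pb2) as (\w+)$", re.MULTILINE)


def make_imports_relative(source: str) -> str:
    """Rewrite 'import x_pb2 as y' lines to 'from . import x_pb2 as y'."""
    return _ABSOLUTE_IMPORT.sub(r"from . import \1 as \2", source)


generated = "import grpc\n\nimport dog_pb2 as dog__pb2\n"
patched = make_imports_relative(generated)
print(patched)
```

To apply it to real files you could read each *_pb2_grpc.py in server/generated, pass its text through the function and write the result back. Running it twice is harmless, because an already patched line no longer starts with `import`.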

I've always found it simpler to create clients first. Create a file called owner_client.py, where we will define our GrpcOwnerClient class, so we can use it directly in the server and avoid creating a new client every time we communicate with the owner-microservice.

    import grpc

    from .generated import owner_pb2, owner_pb2_grpc


    class GrpcOwnerClient:
        def __init__(self):
            # Address (IP and port) of the Owner microservice
            self._channel = grpc.insecure_channel('localhost:50051')
            # Client interface used to send and receive messages
            self._stub = owner_pb2_grpc.OwnerStub(self._channel)

        def notify_owner(self, dog_name):
            req = owner_pb2.NotifyRequest(animalName=dog_name, animalType="Dog")
            res = self._stub.Notify(req)
            return res

        def close(self):
            self._channel.close()

We keep the _channel (where we configure which IP and port to talk to) and the _stub (our client interface, which lets us send and receive messages) as state on the object, and we add a notify_owner method that we call whenever we need to communicate with the owner-microservice.

Next we create a file called dog_grpc.py, where we will define our Dog class. It inherits from dog_pb2_grpc.dogServicer and will be our dog service implementation.

    from .generated import dog_pb2, dog_pb2_grpc
    from .owner_client import GrpcOwnerClient


    class Dog(dog_pb2_grpc.dogServicer):
        def __init__(self):
            self.owner_client = GrpcOwnerClient()

        def CallDog(self, request, context):
            dog_name = request.dogName
            res = f"{dog_name} is going to bark!"
            # Notify the owner and log the confirmation we get back
            print(self.owner_client.notify_owner(dog_name))
            print(res)
            return dog_pb2.DogResponse(dogBark=res)

In the initializer we create the owner_client. In the CallDog implementation we use it to notify the owner with the dog_name and receive a confirmation message from the previously implemented Rust Owner-microservice.

Next, we need to run our server. To do so, we will create our app.py file, which will contain our Server class.

    from concurrent import futures

    import grpc

    from .generated import dog_pb2_grpc
    from .dog_grpc import Dog


    class Server:
        @staticmethod
        def run():
            # Thread pool that handles the incoming RPCs
            server = grpc.server(futures.ThreadPoolExecutor(max_workers=10))
            dog_pb2_grpc.add_dogServicer_to_server(Dog(), server)
            server.add_insecure_port('[::]:50055')
            print("Dog Server is running")
            server.start()
            # Block so the process keeps serving until it is stopped
            server.wait_for_termination()

To run all this as the server module, you need to create a __main__.py file (note the double underscores) inside the server directory, because that is the file python -m looks for in a package.

    from .app import Server

    if __name__ == '__main__':
        Server.run()

From the service base directory you can run the server using

    env/bin/python -m server

Build our API Gateway in Flask

This should be straightforward. We will create a Flask server that has two APIs (/cat/:cat_name and /dog/:dog_name).

We will create a dog client and a cat client. We won't get fancy in structuring this server. If you've followed along so far, I urge you to try creating client classes (like we did in the previous service) and using a RESTful library as an exercise.

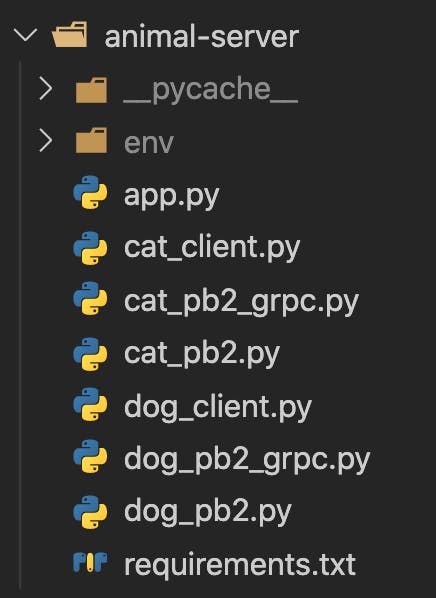

First create an environment the same way we did for the previous service, with a requirements.txt file (which now also needs flask) and an app.py file. We will also create one file for the dog_client and another for the cat_client. Generate the proto files using

    env/bin/python -m grpc_tools.protoc -I../proto --python_out=. --grpc_python_out=. ../proto/cat.proto
    env/bin/python -m grpc_tools.protoc -I../proto --python_out=. --grpc_python_out=. ../proto/dog.proto

Your folder structure will then look like this

It's much better to create a class and keep the channel and stub as state in it, but as stated previously, I'm leaving this as an exercise. Just follow the steps we took in the previous service. For this tutorial, we will only create a function that does all of that in cat_client.py

    import grpc

    import cat_pb2
    import cat_pb2_grpc


    def call_cat(cat_name):
        # A new channel per call keeps the example short; reuse it in real code
        channel = grpc.insecure_channel('localhost:50056')
        stub = cat_pb2_grpc.catStub(channel)
        req = cat_pb2.CatRequest(catName=cat_name)
        response = stub.CallCat(req)
        return response

and in dog_client.py

    import grpc

    import dog_pb2
    import dog_pb2_grpc


    def call_dog(dog_name):
        channel = grpc.insecure_channel('localhost:50055')
        stub = dog_pb2_grpc.dogStub(channel)
        req = dog_pb2.DogRequest(dogName=dog_name)
        response = stub.CallDog(req)
        return response

In the app.py file we need to expose our APIs and run the server.

    from cat_client import call_cat
    from dog_client import call_dog
    from flask import Flask, jsonify
    from google.protobuf.json_format import MessageToDict

    app = Flask(__name__)


    @app.route('/cat/<string:cat_name>')
    def get_cat_meaw(cat_name):
        response = call_cat(cat_name)
        # Convert the protobuf message to a dict so Flask can return it as JSON
        return jsonify(MessageToDict(response))


    @app.route('/dog/<string:dog_name>')
    def get_dog_barking(dog_name):
        response = call_dog(dog_name)
        return jsonify(MessageToDict(response))


    if __name__ == '__main__':
        # Only start the development server when run as "python app.py";
        # "flask run" imports this module and serves the app itself.
        app.run()

It's straightforward: when an API is called, we use the client functions to communicate with our services via gRPC, and after receiving the response we transform it to JSON so it can be returned to the front-end.
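The gateway as written assumes every gRPC call succeeds. If a microservice is down, the stub call raises an error that carries a gRPC status code, and the gateway has to decide which HTTP status to answer with. The sketch below shows one common mapping. It works on plain status names so it runs without any service, and `http_status_for` is a hypothetical helper that is not part of the original project.

```python
# One common mapping from gRPC status code names to HTTP status codes.
# Anything not listed is treated as an internal server error.
GRPC_TO_HTTP = {
    "INVALID_ARGUMENT": 400,
    "UNAUTHENTICATED": 401,
    "PERMISSION_DENIED": 403,
    "NOT_FOUND": 404,
    "ALREADY_EXISTS": 409,
    "RESOURCE_EXHAUSTED": 429,
    "UNIMPLEMENTED": 501,
    "UNAVAILABLE": 503,
    "DEADLINE_EXCEEDED": 504,
}


def http_status_for(grpc_code_name: str) -> int:
    """Translate a gRPC status name into an HTTP status, defaulting to 500."""
    return GRPC_TO_HTTP.get(grpc_code_name, 500)


print(http_status_for("UNAVAILABLE"))
print(http_status_for("DATA_LOSS"))
```

In a Flask route, you would presumably catch `grpc.RpcError` around the client call, take the name of the code it reports and return the mapped status together with a short JSON error body.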

You can use env/bin/flask run to run the Flask Server.

Build our Cat-Analysis microservice in Go

Now that our project is complete, we got a new feature request: implement a new service called cat-analysis-service. It receives an analysis request from the API Gateway telling it which cat needs to be analyzed, responds to the API Gateway that it will analyze the cat, and then asynchronously asks the Cat-microservice to stream the cat's meaws.

First we will create the proto file for this service. Create a catAnalysis.proto in the proto folder.

    syntax = "proto3";

    package proto;

    message AnalysisRequest {
      string catName = 1;
    }

    message AnalysisResponse {
      string result = 1;
    }

    service catAnalysis {
      rpc AnalyzeSound (AnalysisRequest) returns (AnalysisResponse) {}
    }

Then we will modify the cat.proto file to add the new API

    syntax = "proto3";

    package proto;

    message CatRequest {
      string catName = 1;
    }

    message CatResponse {
      string catMeaw = 1;
    }

    message AnalyzeCatSoundRequest {
      string catName = 1;
    }

    message AnalyzeCatSoundResponse {
      string result = 1;
    }

    service cat {
      rpc CallCat (CatRequest) returns (CatResponse);
      rpc AnalyzeCatSound (AnalyzeCatSoundRequest) returns (stream AnalyzeCatSoundResponse) {}
    }

The word stream means the response will be sent as a sequence of messages over time, rather than arriving all at once.

It's very important to understand that the Cat-microservice will be the server streaming the data, and the Cat-Analysis-microservice will be the client receiving it.
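One way to picture a server-streaming RPC is as a generator on the server side and a plain loop on the client side. The sketch below is a pure-Python model of that idea with no gRPC involved. The function names are stand-ins chosen to mirror the proto file, not real generated code.

```python
from typing import Iterator, List


def analyze_cat_sound(cat_name: str, count: int) -> Iterator[str]:
    """Stand-in for the streaming server: produces one message at a time."""
    for i in range(count):
        yield f"{cat_name} Meaw #{i}"


def consume(stream: Iterator[str]) -> List[str]:
    """Stand-in for the client: handles each message as it arrives."""
    received = []
    for message in stream:
        received.append(message)
    return received


messages = consume(analyze_cat_sound("Tom", 3))
print(messages)
```

The point to take away is that the client never waits for the full list: each message is available as soon as the server produces it, and the loop ends when the server has nothing more to send.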

Let's go back to the Cat-microservice to implement our streaming service.

In server.go we will add a new method called AnalyzeCatSound.

Streaming data via gRPC is as simple as:

    func (s *Server) AnalyzeCatSound(request *proto.AnalyzeCatSoundRequest, stream proto.Cat_AnalyzeCatSoundServer) error {
        for i := 0; i < 30; i++ {
            // Simulate a cat that meaws once per second
            time.Sleep(1 * time.Second)
            res := &proto.AnalyzeCatSoundResponse{
                Result: fmt.Sprintf("%s Meaw #%d", request.GetCatName(), i),
            }
            fmt.Printf("%s: Meaw #%d\n", request.GetCatName(), i)
            // Push this message to the client right away
            if err := stream.Send(res); err != nil {
                return err
            }
        }
        // Returning nil closes the stream; the client sees io.EOF
        return nil
    }

Here we loop 30 times. Each time we wait for a second and then send the client a meaw of the requested cat so it can be analyzed. This mocks the real-world case where you have large data, like audio or video, that you need to stream in chunks.

stream.Send(res) is the part that sends the data to the client. We expect that whenever a cat meaws, the client receives it immediately.
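The example above fakes the data with a counter. For real audio or video, the payload would first have to be cut into pieces that each fit in one message. Here is a minimal sketch of that chunking step. `iter_chunks` is a hypothetical helper and the chunk size is arbitrary.

```python
from typing import Iterator


def iter_chunks(data: bytes, chunk_size: int) -> Iterator[bytes]:
    """Yield consecutive slices of data, each at most chunk_size bytes long."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    for start in range(0, len(data), chunk_size):
        yield data[start:start + chunk_size]


audio = b"meaw-meaw-meaw"  # pretend this is a large recording
chunks = list(iter_chunks(audio, 5))
for chunk in chunks:
    print(chunk)
```

Each yielded chunk would become the body of one streamed message, and the receiver can rebuild the original by concatenating the chunks in order.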

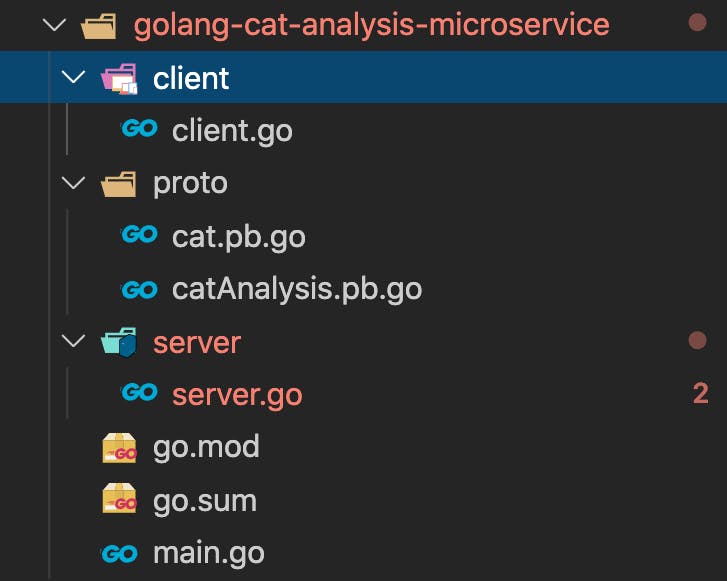

We will create a new folder for our service called golang-cat-analysis-microservice.

We follow the same steps as before to create the client, server and proto packages.

The folder structure should look like this.

I won't go into the details of creating a catClient, because the steps are the very same ones we followed when we created the ownerClient in the Cat-microservice.

Here we only need to focus on the server, to look at the implementation of AnalyzeSound.

    package server

    import (
        "context"
        "fmt"
        "io"
        "math/rand"
        "time"

        "google.golang.org/grpc/metadata"

        c "golang-cat-analysis-microservice/client"
        "golang-cat-analysis-microservice/proto"
    )

    // Server implements the catAnalysis service and keeps a client for the Cat microservice.
    type Server struct {
        cc proto.CatClient
    }

    func NewCatAnalysisService(ctx context.Context) *Server {
        cc := c.NewCatClient(ctx)
        return &Server{
            cc: cc.Client,
        }
    }

    func (s *Server) AnalyzeSound(ctx context.Context, request *proto.AnalysisRequest) (*proto.AnalysisResponse, error) {
        // Copy the incoming metadata into a context that outlives this request
        md, _ := metadata.FromIncomingContext(ctx)
        newCtx := metadata.NewOutgoingContext(context.Background(), md)
        go func() {
            s.BackgroundAnalysis(newCtx, request)
        }()
        return &proto.AnalysisResponse{
            Result: fmt.Sprintf("Analyzing %s ...", request.GetCatName()),
        }, nil
    }

    func (s *Server) BackgroundAnalysis(ctx context.Context, request *proto.AnalysisRequest) {
        streamRequest := &proto.AnalyzeCatSoundRequest{
            CatName: request.GetCatName(),
        }
        stream, err := s.cc.AnalyzeCatSound(ctx, streamRequest)
        if err != nil {
            fmt.Println(err)
            return // without a stream there is nothing to read
        }
        for {
            meaw, err := stream.Recv()
            if err == io.EOF {
                break // the Cat microservice finished streaming
            }
            if err != nil {
                fmt.Println(err)
                return // stop on a broken stream instead of looping forever
            }
            fmt.Println(meaw)
        }
        fmt.Println("Analyzing ... ")
        rand.Seed(time.Now().Unix())
        analysisResults := []string{
            "Smelly Cat",
            "Good Cat",
            "Bad Cat",
            "Hungry Cat",
        }
        catAnalysisResult := rand.Intn(len(analysisResults))
        res := fmt.Sprintf("%s is %s", request.GetCatName(), analysisResults[catAnalysisResult])
        fmt.Println(res)
    }

Let's break that down, starting with the AnalyzeSound method

    func (s *Server) AnalyzeSound(ctx context.Context, request *proto.AnalysisRequest) (*proto.AnalysisResponse, error) {
        md, _ := metadata.FromIncomingContext(ctx)
        newCtx := metadata.NewOutgoingContext(context.Background(), md)
        go func() {
            s.BackgroundAnalysis(newCtx, request)
        }()
        return &proto.AnalysisResponse{
            Result: fmt.Sprintf("Analyzing %s ...", request.GetCatName()),
        }, nil
    }

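This method accepts an AnalysisRequest and creates a new outgoing context, because we are about to run something asynchronously: the incoming ctx is cancelled as soon as the handler returns, so the background work gets a fresh context built from context.Background() that carries the same metadata. The method then returns a proto.AnalysisResponse to the caller, telling them we're analyzing the cat now.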
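The "reply now, work later" pattern is not specific to Go. As a rough Python analogy, with a thread standing in for the goroutine, the sketch below starts the slow part in the background and returns the acknowledgement immediately. All names are hypothetical and the "analysis" is a placeholder.

```python
import threading

results = []  # filled in by the background worker


def background_analysis(cat_name: str) -> None:
    """Stand-in for the slow part: pretend to analyze and record a result."""
    results.append(f"{cat_name} analyzed")


def analyze_sound(cat_name: str):
    """Start the slow work on another thread and reply right away."""
    worker = threading.Thread(target=background_analysis, args=(cat_name,))
    worker.start()
    return f"Analyzing {cat_name} ...", worker


reply, worker = analyze_sound("Tom")
print(reply)   # available without waiting for the analysis
worker.join()  # only so this example can show the finished result
print(results)
```

A real handler would not join the worker. It is done here only to make the example deterministic.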

In the asynchronous task BackgroundAnalysis, we call the Cat-microservice with the cat name and receive a stream of its meaws.

    func (s *Server) BackgroundAnalysis(ctx context.Context, request *proto.AnalysisRequest) {
        streamRequest := &proto.AnalyzeCatSoundRequest{
            CatName: request.GetCatName(),
        }
        stream, err := s.cc.AnalyzeCatSound(ctx, streamRequest)
        if err != nil {
            fmt.Println(err)
            return // without a stream there is nothing to read
        }
        for {
            meaw, err := stream.Recv()
            if err == io.EOF {
                break // the Cat microservice finished streaming
            }
            if err != nil {
                fmt.Println(err)
                return // stop on a broken stream instead of looping forever
            }
            fmt.Println(meaw)
        }
        fmt.Println("Analyzing ... ")
        rand.Seed(time.Now().Unix())
        analysisResults := []string{
            "Smelly Cat",
            "Good Cat",
            "Bad Cat",
            "Hungry Cat",
        }
        catAnalysisResult := rand.Intn(len(analysisResults))
        res := fmt.Sprintf("%s is %s", request.GetCatName(), analysisResults[catAnalysisResult])
        fmt.Println(res)
    }

Finally, we print the analysis result, which is a random pick from a slice. In a real-world scenario you would probably save the result of a real analysis in a database.

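As a last step, we should regenerate the proto files for the Cat-microservice and the animal-server.

So it might be a good idea to create a shell script that does this for us. Keep in mind that regenerating the Python files for the Dog microservice overwrites the manual import fix we made earlier, so it has to be reapplied afterwards.

In the project base directory I will create generate-proto.sh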

    cd golang-cat-microservice
    protoc -I=../proto --go_out=plugins=grpc:./proto ../proto/owner.proto
    protoc -I=../proto --go_out=plugins=grpc:./proto ../proto/cat.proto
    cd ..

    cd python-dog-microservice
    env/bin/python -m grpc_tools.protoc -I../proto --python_out=./server/generated --grpc_python_out=./server/generated ../proto/dog.proto
    env/bin/python -m grpc_tools.protoc -I../proto --python_out=./server/generated --grpc_python_out=./server/generated ../proto/owner.proto
    cd ..

    cd animal-server
    env/bin/python -m grpc_tools.protoc -I../proto --python_out=. --grpc_python_out=. ../proto/cat.proto
    env/bin/python -m grpc_tools.protoc -I../proto --python_out=. --grpc_python_out=. ../proto/dog.proto
    env/bin/python -m grpc_tools.protoc -I../proto --python_out=. --grpc_python_out=. ../proto/catAnalysis.proto
    cd ..

    cd golang-cat-analysis-microservice
    protoc -I=../proto --go_out=plugins=grpc:./proto ../proto/cat.proto
    protoc -I=../proto --go_out=plugins=grpc:./proto ../proto/catAnalysis.proto
    cd ..
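The Python half of the script repeats the same command with different file names. As an alternative sketch, the commands could be built from a small table instead. The `PYTHON_SERVICES` table and the `protoc_command` helper below are hypothetical, and the example only prints the commands rather than running them.

```python
# Hypothetical table: service directory -> (output directory, proto names).
PYTHON_SERVICES = {
    "python-dog-microservice": ("./server/generated", ["dog", "owner"]),
    "animal-server": (".", ["cat", "dog", "catAnalysis"]),
}


def protoc_command(out_dir: str, proto_name: str) -> list:
    """Build the grpc_tools.protoc argument list for one .proto file."""
    return [
        "env/bin/python", "-m", "grpc_tools.protoc",
        "-I../proto",
        f"--python_out={out_dir}",
        f"--grpc_python_out={out_dir}",
        f"../proto/{proto_name}.proto",
    ]


for service_dir, (out_dir, protos) in PYTHON_SERVICES.items():
    for name in protos:
        # Printed instead of executed; subprocess.run(cmd, cwd=service_dir)
        # would run the command from inside the service directory.
        print(service_dir, " ".join(protoc_command(out_dir, name)))
```

Adding a new proto file to a service then becomes a one-word change in the table.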

I will also create a catAnalysis.py file in the animal-server

    import grpc

    import catAnalysis_pb2
    import catAnalysis_pb2_grpc


    def analyze_cat(cat_name):
        channel = grpc.insecure_channel('localhost:50057')
        stub = catAnalysis_pb2_grpc.catAnalysisStub(channel)
        req = catAnalysis_pb2.AnalysisRequest(catName=cat_name)
        response = stub.AnalyzeSound(req)
        return response

And add the API to app.py

    # Goes next to the other client imports at the top of app.py
    from catAnalysis import analyze_cat


    @app.route('/analyze/<string:cat_name>')
    def get_cat_analysis(cat_name):
        response = analyze_cat(cat_name)
        return jsonify(MessageToDict(response))

Now let's see the whole system working together.

Summary

In this series (Part I and Part II) we tried to demonstrate how to build microservices without language restrictions. We worked with Go, Python and Rust. We used gRPC for communication between services and demonstrated its pros. We also demonstrated how simple it is to add new features in a microservices architecture, and the streaming features of gRPC.

I hope this series encourages you to try gRPC, as it's a really good and underrated technology that should be used more often.

All the code of this series can be found here.